Imagine waking up to headlines announcing that a household name (say, a major retailer or health service) has fallen victim to a cyber‑attack, exposing millions of personal records. In a world where data is gold, breaches are not just costly; they are destabilising. Such incidents are becoming more frequent and more sophisticated, fuelled by globally connected systems and the ever‑growing value of personal data. AI hallucinations pose significant challenges for applications that require factual accuracy, such as medical diagnosis, legal research, or journalistic reporting. They highlight the importance of human oversight, fact-checking mechanisms, and more reliable AI systems that can distinguish between what they know and what they are uncertain about.

What is AI Hallucination?

AI hallucination refers to the phenomenon where artificial intelligence systems, particularly large language models and generative AI, produce information that appears plausible and coherent but is factually incorrect, misleading, or entirely fabricated. This occurs when AI models generate responses based on patterns learned during training rather than retrieving actual facts, essentially “filling in gaps” with convincing-sounding but inaccurate information. The term “hallucination” is borrowed from psychology, where it describes perceiving something that isn’t actually present, and in AI contexts, it manifests as the system confidently presenting false data, non-existent citations, made-up statistics, or fictional events as if they were real.

A data breach occurs when protected or sensitive information is accessed or disclosed without authorisation. In today’s hyper‑connected digital ecosystem, vast amounts of data flow continuously, giving cyber‑criminals abundant opportunities to slip through unchecked.

Enter artificial intelligence (AI): a transformative force that simultaneously acts as a powerful defender of data security and, paradoxically, as a new frontier of risk. This article explores how AI functions both as a robust shield and as a potential vulnerability in the battle for data privacy.

AI as a Shield: Preventing and Mitigating Breaches

Threat Detection & Prediction

AI and machine learning (ML) models excel at analysing vast swathes of network traffic and user behaviour in real time, identifying anomalies that would slip past traditional signature‑based defences. This enables early detection of suspicious activity, reducing response time and mitigating damage.
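As a rough illustration of the idea, the sketch below flags values that deviate sharply from a learned baseline. It is a deliberately simple statistical stand-in (a z-score test), not the method used by any particular product; the function name `find_anomalies`, the threshold of 3.0, and the traffic figures are all assumptions made for the example.

```python
from statistics import mean, stdev


def find_anomalies(baseline, observed, threshold=3.0):
    """Return (index, value) pairs from `observed` that lie more than
    `threshold` standard deviations away from the baseline mean."""
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # A perfectly flat baseline: any different value counts as anomalous.
        return [(i, v) for i, v in enumerate(observed) if v != mu]
    return [
        (i, v)
        for i, v in enumerate(observed)
        if abs(v - mu) / sigma > threshold
    ]


# Hypothetical requests-per-minute counts for a single user account.
baseline = [52, 48, 50, 51, 49, 47, 53, 50]
observed = [49, 51, 240, 50]

print(find_anomalies(baseline, observed))  # [(2, 240)]
```

Real systems model many signals at once and update the baseline continuously, but the principle is the same: learn what normal looks like, then flag what does not fit, with no attack signature required.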

Automated Incident Response

Advanced AI‑powered systems can isolate compromised devices, block malicious IPs, or even shut down vulnerable network segments in milliseconds. Security Orchestration, Automation, and Response (SOAR) platforms now empower security teams to trigger rapid, automated responses, buying precious time in a breach scenario.

Vulnerability Management

AI can proactively scan codebases and network configurations to flag weaknesses long before attackers exploit them. Through risk‑based prioritisation, AI helps security teams focus on the most critical vulnerabilities, maximising impact even with limited resources.
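Risk-based prioritisation can be sketched as a scoring function that combines severity with context. The example below uses hand-picked weights rather than a trained model, purely to show the shape of the idea; the `Finding` class, the multipliers, and the sample findings are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    name: str
    severity: float          # 0-10, for example a CVSS base score
    internet_facing: bool    # reachable from outside the network?
    exploit_available: bool  # is a working exploit publicly known?


def risk_score(finding):
    """Scale raw severity by exposure and exploitability (assumed weights)."""
    score = finding.severity
    if finding.internet_facing:
        score *= 1.5
    if finding.exploit_available:
        score *= 2.0
    return score


def prioritise(findings):
    """Return findings ordered from highest to lowest risk score."""
    return sorted(findings, key=risk_score, reverse=True)


findings = [
    Finding("outdated TLS library", 7.5, False, False),
    Finding("SQL injection in login form", 6.0, True, True),
    Finding("weak admin password policy", 9.0, False, False),
]

for f in prioritise(findings):
    print(f"{risk_score(f):5.1f}  {f.name}")
```

Note that the SQL injection ranks first despite having the lowest raw severity, because it is both exposed and exploitable. That reordering is the point of prioritising by risk rather than by severity alone.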

This isn’t just theoretical. Reports suggest that Agentic Identity and Security Platforms (AISP) are becoming integral to enterprise security strategy, particularly for managing AI agents themselves and safeguarding against threats such as prompt injection and the poisoning of agent communications.

The Double-Edged Sword: AI-Driven Cyber Risks

Malicious actors are increasingly weaponising AI. Adversarial AI, where attackers manipulate data to trick AI defence systems, is on the rise. Equally concerning is the proliferation of automated phishing campaigns, in which AI crafts highly convincing spear-phishing messages at scale.

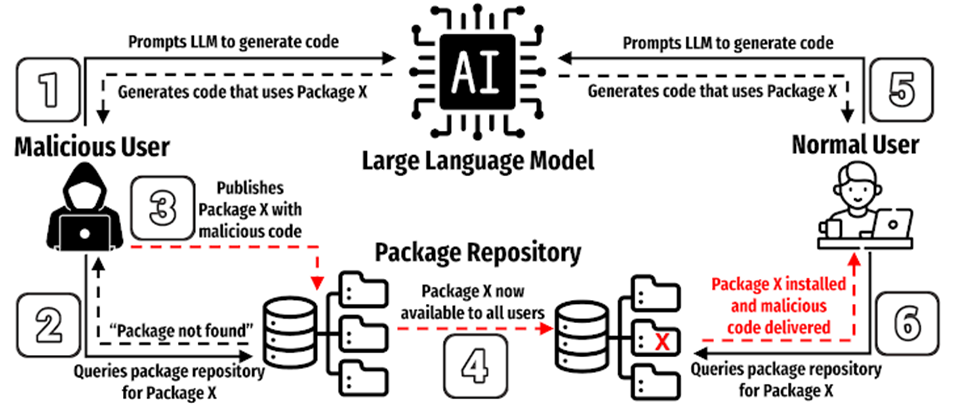

AI‑driven malware and bots now autonomously hunt for and exploit system vulnerabilities, adapting to security updates almost in real time. These tools are highly resilient and difficult to counter once unleashed. AI also creates risk on the development side: a study found that approximately 20% of code dependencies recommended by code‑generating LLMs were hallucinated, and many recurred across prompts, creating opportunities for attackers to publish malicious packages under those names.
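One practical defence against hallucinated dependencies is to check every suggested package against a vetted list before installing anything. The sketch below assumes such a list exists inside the organisation; the function name, the allowlist contents, and the package name `fastjsonx-utils` are invented for illustration.

```python
def unverified_dependencies(suggested, vetted_packages):
    """Return the suggested package names that are absent from the vetted
    set. Comparison is case-insensitive."""
    vetted = {name.lower() for name in vetted_packages}
    return [name for name in suggested if name.lower() not in vetted]


# Hypothetical internal allowlist of reviewed packages.
vetted = {"requests", "numpy", "cryptography"}

# Hypothetical dependencies proposed by a code-generating model.
suggested = ["requests", "fastjsonx-utils", "NumPy"]

print(unverified_dependencies(suggested, vetted))  # ['fastjsonx-utils']
```

Anything the check returns should be reviewed by a person before installation. A name that merely exists on a public registry is not proof of safety, since an attacker may already have registered it.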

Privacy Concerns

AI systems thrive on data, and lots of it. Often this includes sensitive personal information. Without proper safeguards, this data becomes vulnerable, risking misuse or leaks. Worse still, models may inadvertently replicate biases or privacy violations learned during training.

These concerns were front and centre at Infosecurity Europe 2025, which introduced an AI and cloud security theatre focusing on generative AI threats like deepfakes, automated phishing, and LLM exploitation.

Moreover, Europol has warned that AI is turbo‑charging organised crime, from realistic deepfakes and voice cloning to sophisticated fraud and extortion, threatening societal foundations across the EU.

The Human Element: Regulation, Ethics, and the Future of Privacy

The rapid pace of AI development has outstripped many legal frameworks. While the EU’s AI Act is now taking effect in stages (its obligations for general-purpose AI models began to apply on 2 August 2025), it remains unclear whether laws can keep pace with evolving threats.

When a personal data breach occurs, EU institutions are required to notify the European Data Protection Supervisor (EDPS) within 72 hours of becoming aware of it and, if the risk to individuals is high, to inform the affected data subjects without undue delay. In addition, guidelines on generative AI emphasise the need for Data Protection Impact Assessments (DPIAs), assessment of lawfulness, and clear roles for Data Protection Officers (DPOs).
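The 72-hour window is simple arithmetic, but it is easy to get wrong under pressure, so incident tooling often computes it automatically. The sketch below is illustrative only and is not legal advice; the function names and the example timestamp are assumptions.

```python
from datetime import datetime, timedelta, timezone

NOTIFICATION_WINDOW = timedelta(hours=72)


def notification_deadline(aware_at):
    """Return the latest notification time, 72 hours after the moment
    the organisation became aware of the breach."""
    if aware_at.tzinfo is None:
        raise ValueError("aware_at must be timezone-aware")
    return aware_at + NOTIFICATION_WINDOW


def hours_remaining(aware_at, now):
    """Hours left before the deadline (negative once it has passed)."""
    return (notification_deadline(aware_at) - now).total_seconds() / 3600


# Hypothetical incident: breach discovered on 3 March 2025 at 09:30 UTC.
aware_at = datetime(2025, 3, 3, 9, 30, tzinfo=timezone.utc)

print(notification_deadline(aware_at).isoformat())
# 2025-03-06T09:30:00+00:00
```

Using timezone-aware timestamps avoids ambiguity when the response team and the affected systems sit in different time zones.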

Ethical Considerations

AI in security raises deep ethical questions: the potential for surveillance, algorithmic bias, and lack of transparency are among the concerns. Policymakers and technologists must ensure AI systems are fair, auditable, and accountable.

Academic research underscores the urgency of ethical and regulatory imperatives: a recent paper calls for harmonised global frameworks to balance innovation with oversight in AI‑driven cybersecurity. Another proposes a multi‑layer defence model blending AI detection with targeted policy measures to neutralise emerging “cyber shadows”.

A stark example of AI’s double-edged nature in data privacy emerged in April 2023, when it was reported that Samsung employees had accidentally leaked confidential company information by using ChatGPT to review internal code and documents. In three separate incidents within just 20 days, Samsung engineers inadvertently exposed sensitive data, including proprietary source code, internal meeting recordings, and semiconductor equipment data, by inputting it into the AI chatbot for help with debugging and optimisation tasks.

This incident highlighted how employees’ well-intentioned use of generative AI tools to enhance productivity can inadvertently compromise corporate secrets: data entered into ChatGPT is stored on OpenAI’s servers and, depending on the settings in place, may be retained and used to train its models. The leak was concerning enough that Samsung subsequently restricted the use of generative AI tools on company devices and networks, illustrating how organisations are grappling with the tension between leveraging AI’s capabilities and protecting sensitive information. The episode underscores the critical need for robust AI governance policies and employee training to prevent similar inadvertent exposures. IBM’s 2025 Cost of a Data Breach Report found that 13% of organisations had already experienced breaches of AI models or applications, and that 97% of those lacked proper AI access controls.
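One technical control that can back up such a policy is a filter that scrubs obvious secrets from text before it leaves the organisation. The sketch below is a minimal, assumption-laden example: the two regular expressions (including the `sk-` and `key-` prefixes) are illustrative patterns, not a complete or authoritative list, and pattern matching alone cannot recognise proprietary source code.

```python
import re

# Illustrative patterns only; a real deployment would need a far
# broader, regularly reviewed set.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "API_KEY": re.compile(r"\b(?:sk|key)-[A-Za-z0-9]{16,}\b"),
}


def redact(text):
    """Replace every match of each pattern with a labelled placeholder."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label} REDACTED]", text)
    return text


# Hypothetical prompt an engineer might paste into a chatbot.
prompt = (
    "Debug this: client = Client('sk-abcdef1234567890XYZ'), "
    "owner alice@example.com"
)

print(redact(prompt))
```

A filter like this reduces accidental leakage of credentials and contact details, but it is a complement to governance and training rather than a substitute for them.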

Leading Companies Using AI for Data Security

Several major players are pushing the boundaries of AI-driven cybersecurity. Their platforms illustrate how AI is shaping the modern defence landscape:

- Darktrace: Known for its “Enterprise Immune System,” Darktrace uses self-learning AI to detect anomalies in real time, modelling normal behaviour and flagging potential breaches before they escalate.

- CrowdStrike: Its Falcon platform integrates AI and ML to deliver endpoint protection, threat intelligence, and automated response across global networks.

- IBM Security (Watson for Cyber Security): Harnesses AI to sift through massive datasets, detecting advanced threats and providing actionable intelligence for human analysts.

- Microsoft Security (Defender + Sentinel): Uses AI to correlate billions of daily signals across email, cloud, endpoints, and identity systems to predict and mitigate attacks.

- Palo Alto Networks (Cortex XDR & Prisma AI): Employs AI for extended detection and response, integrating network, cloud, and endpoint data to stop sophisticated threats.

- Vectra AI: Specialises in AI-driven threat detection and response for hybrid and cloud environments, focusing on identifying hidden attacker behaviours.

- Fortinet (FortiAI): Uses deep learning to autonomously detect and respond to zero-day threats with minimal human intervention.

These companies demonstrate how AI is not just a theoretical concept but already at the core of real-world cybersecurity strategies, helping organisations safeguard sensitive data at scale.

A Call for Vigilance and Collaboration

AI is a double-edged sword: it bolsters security through advanced detection, automation, and risk prioritisation, yet simultaneously introduces complex vulnerabilities, from AI-powered attack tools and supply-chain threats to data privacy risks and regulatory lag.

AI hallucination represents one of the most pressing challenges at the intersection of artificial intelligence advancement and data privacy protection. As organisations increasingly integrate AI systems into their operations, the dual nature of these technologies becomes starkly apparent: whilst they offer unprecedented capabilities in threat detection, automated response, and vulnerability management, they simultaneously introduce new vectors for data exposure and security breaches. The Samsung incident exemplifies how even well-intentioned use of AI tools can lead to catastrophic data leaks, demonstrating that the very systems designed to enhance productivity and security can become conduits for confidential information exposure.

Cybersecurity will increasingly resemble an arms race: AI-powered defenders pitted against intelligent, adaptive attackers. Success depends on striking the right balance between innovation and caution. As we venture deeper into the AI era, safeguarding data privacy demands not just technological innovation, but also responsible regulation, ethical foresight, and collaboration across industry, government, and ethics bodies. Only by combining these strengths can we ensure AI remains a guardian, not a guarantor of a data-privacy crisis. Ultimately, we must all remain vigilant and understand how to react in the event of a data breach to protect our personal information and uphold data privacy.